Back(prop) To The Future

How do you connect two things: one you are somewhat familiar with (the maths of neural networks), and another that fills you with dread (physics)?

A quick story, before we begin. I was deeply afraid of physics in 11th grade. I would freeze up when I saw certain problems. These things were meant to be connected to the real world. It is the physical world after all, right? While trying to make sense of all the straight-line forces and the friction coefficients, my brain would reach its limit. This is not how I saw objects behave. The way our textbooks said light travelled (in straight lines, bending between mediums) was not how I experienced the diffused morning sunlight. It pained me to see this happen, especially because I seemed to understand maths intuitively. At each step I saw that these two subjects were connected, but I simply could not grasp physics in the same way. So this connection to physics both intrigued and scared me. Here is the chance to finally get at some of this physics business, after all these years. Let's try.

First, a recap: what is this brachistochrone business? It is a problem posed by Johann Bernoulli. There is a ball, and it has to get from point A to point B. What is the fastest path for it to do so? Disregard friction and consider only gravity (for now).

You might think it is the straight path. That is the easiest conclusion to come to. But the question does not ask for the shortest path. It asks for the path that takes the least amount of time. This problem led to a new field of calculus called the calculus of variations.

It defines these things called functionals. Imagine you have a set of some of the paths the ball could take. You associate each one with the time the ball takes to get from A to B along it. This mapping is an example of a functional: a map from functions $f$ to real numbers.

Formal definition:

A functional $S[f]$ is a map from functions $f$ to the real numbers.
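To make the "path in, number out" idea concrete, here is a minimal sketch of the descent-time functional in Python. The setup is my own assumption, not something from the references: the ball starts at rest at A = (0, 0), `ys` holds the depth below A (so it grows downward), the path is approximated by straight segments, and the names `descent_time` and `G` are just illustrative. On each straight segment the acceleration is constant, so the time is the segment length divided by the average of the entry and exit speeds.

```python
import numpy as np

G = 9.81  # gravitational acceleration in m/s^2 (assumed value)


def descent_time(xs, ys, g=G):
    """Time for a frictionless ball, starting at rest, to slide along a
    piecewise-linear path. ys is the depth below the start point (>= 0).
    Assumes no two consecutive points both sit at depth 0."""
    xs = np.asarray(xs, dtype=float)
    ys = np.asarray(ys, dtype=float)
    seg_len = np.hypot(np.diff(xs), np.diff(ys))  # length of each segment
    speed = np.sqrt(2.0 * g * ys)                 # energy conservation: v = sqrt(2 g y)
    # Constant acceleration on a straight segment: time = length / mean speed.
    return float(np.sum(2.0 * seg_len / (speed[:-1] + speed[1:])))


xs = np.linspace(0.0, 1.0, 201)
paths = {
    "straight line": xs,           # y = x
    "steep start": np.sqrt(xs),    # y = sqrt(x), drops quickly at first
}
for name, ys in paths.items():
    print(f"{name}: {descent_time(xs, ys):.4f} s")
```

Each entry in `paths` is a function (sampled at the points `xs`), and `descent_time` turns it into a single real number. That is all a functional is.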

Now, a functional has something called stationary paths, just as a function has stationary points, where the slope is 0. To see what that means, let's look at the above example again. The stationary path in this case would be exactly what we are looking for. Given all the paths and their associated times, the path with the minimum time is the stationary one.

In a general mathematical context:

Let $S[y]$ be a functional that maps functions $y$ that satisfy $y(a) = A$ and $y(b) = B$ to the real numbers. Any such function $y(x)$ for which $$\left.\frac{d}{d\varepsilon} S[y + \varepsilon g]\right|_{\varepsilon = 0} = 0$$ for all functions $g(x)$ that satisfy $g(a) = g(b) = 0$, is said to be a stationary path of $S$.
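The definition can be checked numerically. The sketch below is my own illustration, using a simpler functional than descent time: arc length, whose stationary path between two points is the straight line. It estimates the derivative with respect to $\varepsilon$ at $\varepsilon = 0$ by a central difference, using the perturbation $g(x) = \sin(\pi x)$, which vanishes at both endpoints. The helper names `arc_length` and `directional_derivative` are made up for this example.

```python
import numpy as np


def arc_length(ys, xs):
    """Length of the piecewise-linear curve through the points (xs, ys)."""
    return float(np.sum(np.hypot(np.diff(xs), np.diff(ys))))


def directional_derivative(S, y, g, xs, eps=1e-5):
    """Central-difference estimate of d/d(eps) S[y + eps * g] at eps = 0."""
    return (S(y + eps * g, xs) - S(y - eps * g, xs)) / (2.0 * eps)


xs = np.linspace(0.0, 1.0, 1001)
g = np.sin(np.pi * xs)   # perturbation with g(0) = g(1) = 0

line = xs                # straight line from (0, 0) to (1, 1)
curve = xs ** 2          # a parabola between the same endpoints

print("straight line:", directional_derivative(arc_length, line, g, xs))
print("parabola:     ", directional_derivative(arc_length, curve, g, xs))
```

For the straight line the estimate comes out at essentially zero, while for the parabola it is clearly nonzero, so nudging the parabola changes its length to first order. This only tests one choice of $g$; the definition asks for the derivative to vanish for every allowed $g$.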

What this means practically is that a stationary path can also be the one that takes the most time, but in our case we are looking for the minimizing one. In either case, the first-order change in $S$ vanishes for every allowed small perturbation of the path.

So how do we find this minimal path? Well, long story, but we end up with an equation called the Euler-Lagrange equation.

For a functional of the form $S[y] = \int_a^b L(x, y, y')\,dx$: $$\frac{\partial L}{\partial y} - \frac{d}{dx} \left( \frac{\partial L}{\partial y'} \right) = 0$$

That weird sign is the partial derivative. Basically, if you have a function of several variables and need to differentiate it, the partial derivative is the derivative with respect to one chosen variable, with the others held fixed.
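As a quick sanity check of the equation (a worked example of my own, not taken from the references), try it on the shortest-path problem, where the functional is arc length:

$$S[y] = \int_a^b \sqrt{1 + y'^2}\,dx, \qquad L(x, y, y') = \sqrt{1 + y'^2}$$

Here $L$ does not depend on $y$ directly, so $\partial L / \partial y = 0$, and the equation reduces to

$$\frac{d}{dx} \left( \frac{y'}{\sqrt{1 + y'^2}} \right) = 0$$

So $y' / \sqrt{1 + y'^2}$ is a constant, which forces $y'$ itself to be a constant. The stationary path for length is the straight line, as expected.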

For our brachistochrone problem, as it turns out, when you solve this equation you get a path called the cycloid. A cycloid is the curve traced by a point on the rim of a circular wheel as the wheel rolls along a straight line without slipping.
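Here is a rough sketch of what that looks like in numbers. The assumptions are mine: the ball starts at rest at A = (0, 0), depth is measured downward, and B = (1, 1). The cycloid is parametrised as $x = r(\theta - \sin\theta)$, $y = r(1 - \cos\theta)$. Along this curve the speed from energy conservation works out so that the time is just $\theta_{end}\sqrt{r/g}$. The function names are illustrative, and the bisection is a plain hand-rolled one rather than a library routine.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed value)


def cycloid_through(x_b, y_b):
    """Find (r, theta_end) so the cycloid x = r(t - sin t), y = r(1 - cos t)
    starting at the origin passes through (x_b, y_b), with y measured downward.
    Uses bisection on the ratio x/y, which increases with t on (0, 2*pi).
    Assumes x_b / y_b is not extremely small."""
    target = x_b / y_b
    lo, hi = 1e-3, 2.0 * math.pi - 1e-3
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        ratio = (mid - math.sin(mid)) / (1.0 - math.cos(mid))
        if ratio < target:
            lo = mid
        else:
            hi = mid
    theta_end = 0.5 * (lo + hi)
    r = y_b / (1.0 - math.cos(theta_end))
    return r, theta_end


def cycloid_time(x_b, y_b, g=G):
    """Descent time along the cycloid: theta_end * sqrt(r / g)."""
    r, theta_end = cycloid_through(x_b, y_b)
    return theta_end * math.sqrt(r / g)


def straight_line_time(x_b, y_b, g=G):
    """Descent time along the straight incline from the origin to (x_b, y_b)."""
    return math.sqrt(2.0 * (x_b ** 2 + y_b ** 2) / (g * y_b))


print("cycloid:      ", cycloid_time(1.0, 1.0))
print("straight line:", straight_line_time(1.0, 1.0))
```

The cycloid time comes out smaller than the straight-line time, even though the cycloid is the longer path.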

This is what ends up being the path which takes the minimal amount of time. Here's a video that shows it works

Pretty solid explanation: the ball needs to accelerate towards point B without falling straight to the ground. If it falls straight down, it won't move towards point B. This is the path it takes.

Now, there are alternative explanations here. This is also the path that follows the "principle of least action", and it can be derived using conservation of energy. Bernoulli had a solution that connected this problem to Fermat's principle of least time, and through it to the law of refraction. On finding his solution, Bernoulli said:

In this way I have solved at one stroke two important problems – an optical and a mechanical one – and have achieved more than I demanded from others: I have shown that the two problems, taken from entirely separate fields of mathematics, have the same character.

And so we get to the root. Functionals can be used to solve all kinds of different problems, as it turns out. How light travels in different mediums. How a film of soap spreads. And of course, you could consider a neural network. As explained before, the training data is a set of points (x and y values), and any candidate function fitted to them gets a value from the loss function of your choice. So each function is associated with a loss value. This is a kind of functional. If you minimize it (i.e. find the stationary path for it), you have the "model", the approximation that best meets our criteria.
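In code, the "loss as a functional" idea is almost embarrassingly small. This is a toy sketch with made-up data and hand-picked candidate functions (no actual neural network or training loop), and `mse_loss` is just an illustrative name for a mean squared error:

```python
import numpy as np


def mse_loss(f, xs, ys):
    """A functional: takes a whole function f and returns one real number."""
    return float(np.mean((f(xs) - ys) ** 2))


# Made-up training data lying exactly on the line y = 2x + 1.
xs = np.linspace(-1.0, 1.0, 50)
ys = 2.0 * xs + 1.0

# A few candidate functions, chosen by hand for illustration.
candidates = {
    "true line": lambda x: 2.0 * x + 1.0,
    "flat": lambda x: np.zeros_like(x),
    "wrong slope": lambda x: -x + 1.0,
}

for name, f in candidates.items():
    print(f"{name}: loss = {mse_loss(f, xs, ys):.4f}")

# "Training" here is just picking the candidate with the smallest loss.
best = min(candidates, key=lambda name: mse_loss(candidates[name], xs, ys))
print("best candidate:", best)
```

A real network searches over a far larger family of functions by adjusting weights, but the object being minimized has the same shape: function in, number out.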

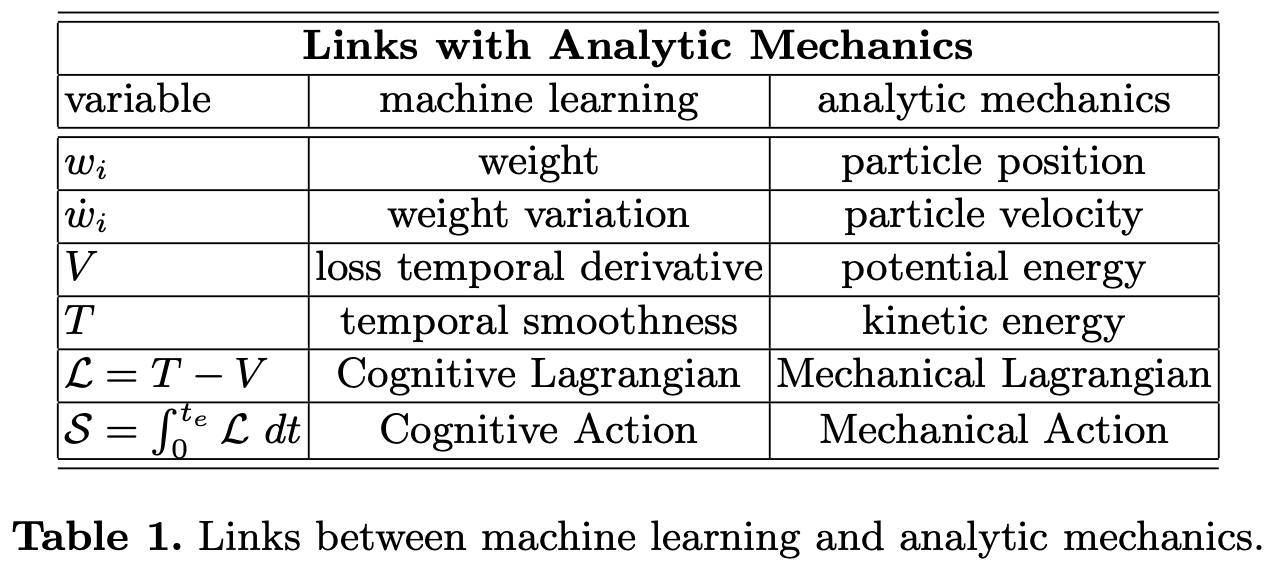

A paper I found also talks about this. It mentions that the only difference in application is that neural networks have discrete weights, whereas the physics methods involve continuous functions. It makes the following comparison for how the Euler-Lagrange equations are used in both problems-

There are also things called Neural ODEs, which replace discrete network layers with continuous functions.

LeCun himself recognised this. "The concepts are new, if not the algorithm", he said. But I am still NOT satisfied. Okay, you give some theory to something well known. Cool? I have derived some things, sure, but nothing else has happened? How do you make this real? I still found myself unable to get a grasp on this damn thing.

How do we make it interesting? Well, I have this skill called designing programs? You all have heard of this? Strange thing. But I can use it to simulate things, and try to model some of this physics business? So, how about we run some simulations to see whether minimising the time taken from A to B with a neural network gives us the brachistochrone curve? I am not clear on how exactly we would do this, since in a normal approximation we would already know the path with minimum time (i.e. the cycloid). How do we reach this in an open-ended manner? I have no clue. Must try nonetheless.

References

1. The unreasonable effectiveness of mathematics

2. Introduction to the calculus of variations

3. Bernoulli solution

4. Neural ODEs